Hi, I am Zihang. I am a researcher at UC Berkeley and the International Computer Science Institute (ICSI), advised by Prof. Michael Mahoney. I also have the privilege of working closely with Prof. Yaoqing Yang from Dartmouth College and Shiwei Liu from MPI-IS. I previously obtained my Master’s degree in EECS at UC Berkeley.

My research focuses on understanding and improving the transparency and efficiency of learning models. I am particularly interested in:

- Understanding the mechanisms and behaviors of AI systems, such as generalization, uncertainty, and emergent abilities of frontier models, and using principled approaches to improve their transparency and efficiency.

- Leveraging geometric (spectral) principles to develop new (numerical) algorithms and frameworks for training large-scale AI systems and solving numerical problems.

🔥 News

- 2026.05: Our paper “Spectral Signature of Large Language Models” has been accepted at KDD 2026.

- 2026.04: Two papers accepted at ICML 2026: RMNP and RL4RLA.

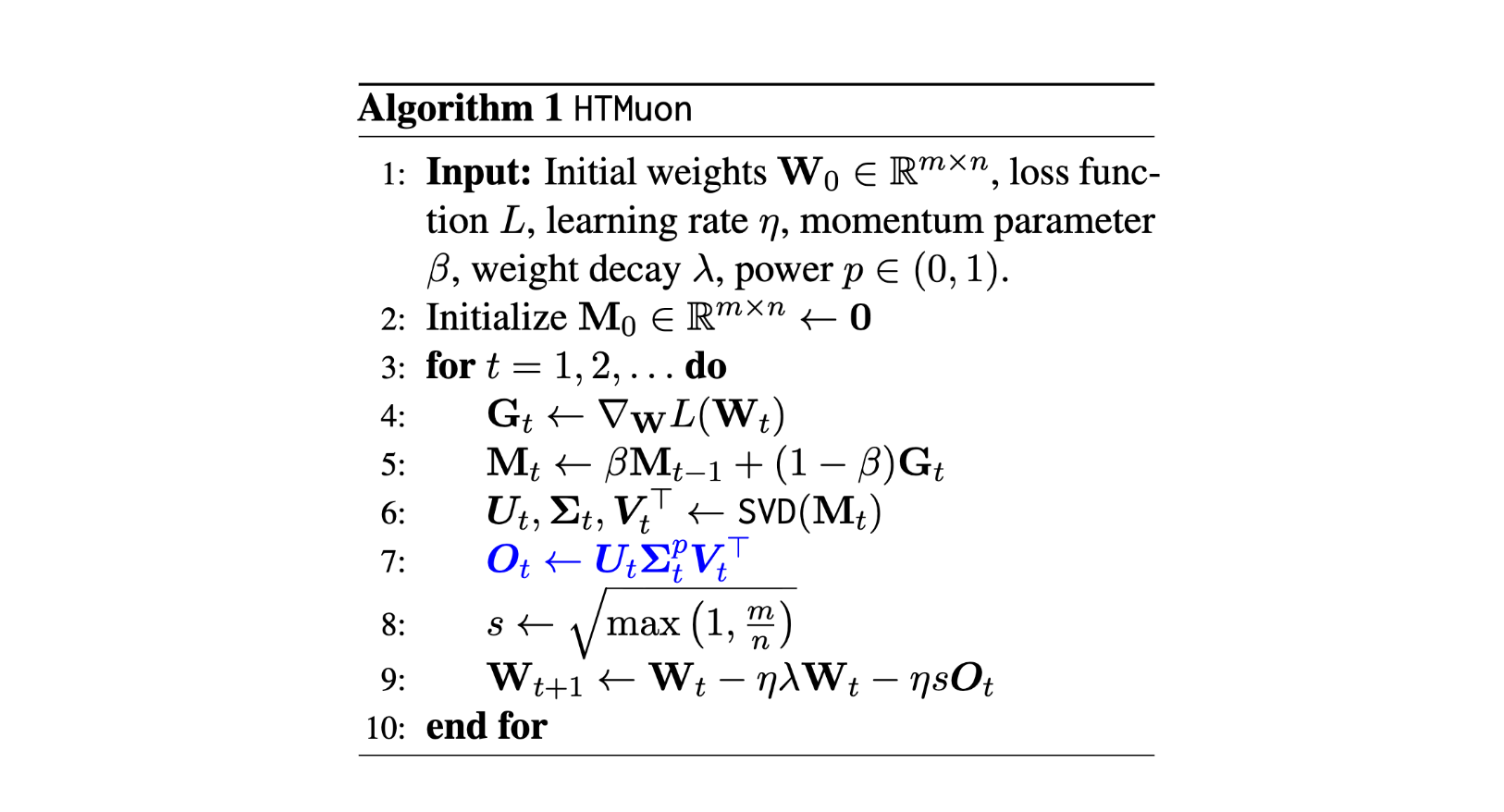

- 2026.04: HTMuon has been accepted at ACL 2026.

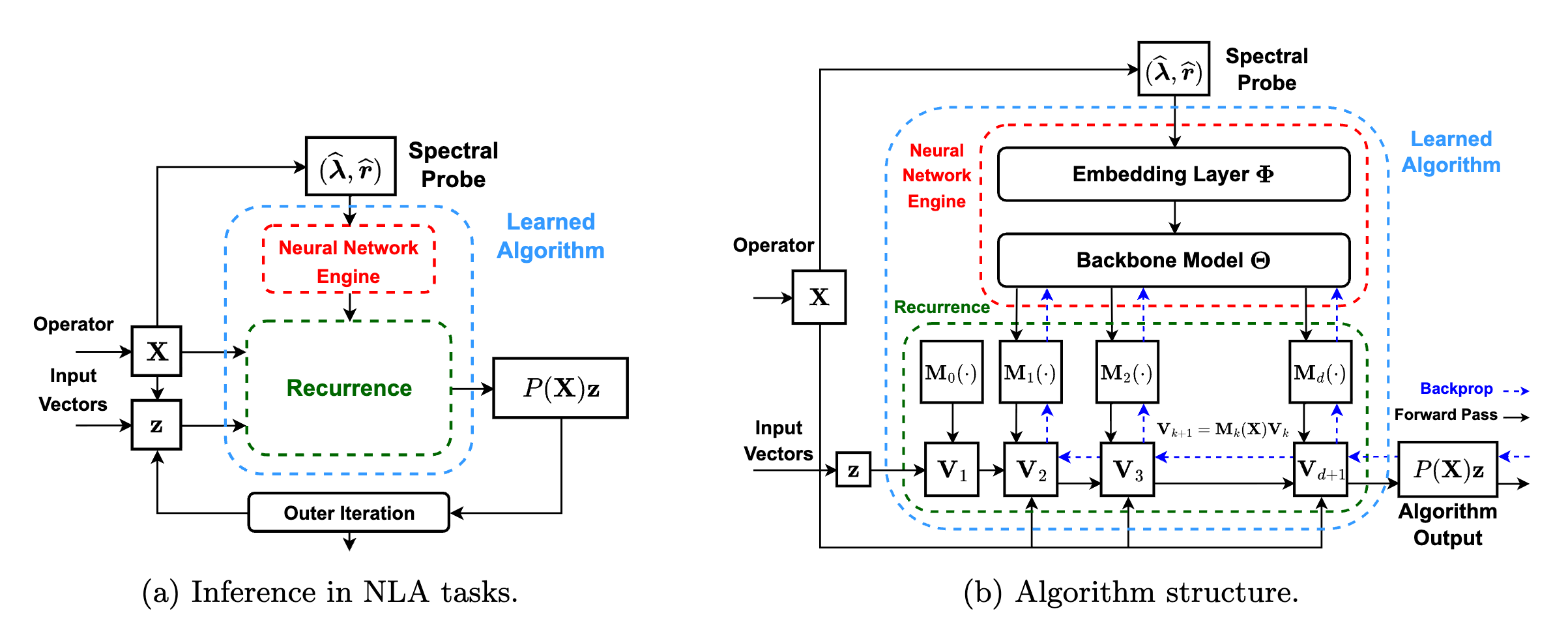

- 2026.02: Excited to share our recent work bridging spectral analysis and ML: AutoSpec, a neural network framework for discovering iterative spectral algorithms for numerical linear algebra (NLA) and optimization, and HTMuon, which improves Muon via heavy-tailed spectral correction.

- 2025.06: Started as a research engineer at ICSI, working on numerical algorithms and deep learning.

- 2025.05: Our paper “Principal Weights Emerge after Rank Reduction for Reasoning-Focused Supervised Fine-Tuning” has been accepted to ICML 2025.

- 2024.11: Gave a presentation at EMNLP 2024 on foundation model diagnosis. Check out the live recording here.

- 2024.09: Excited to share that our work “Model Balancing Helps Low-data Training and Fine-tuning” has been accepted to EMNLP 2024 as an oral presentation.

📝 Selected Publications

Learning to Discover Iterative Spectral Algorithms

Zihang Liu*, Oleg Balabanov*, Yaoqing Yang, Michael W. Mahoney

arXiv preprint

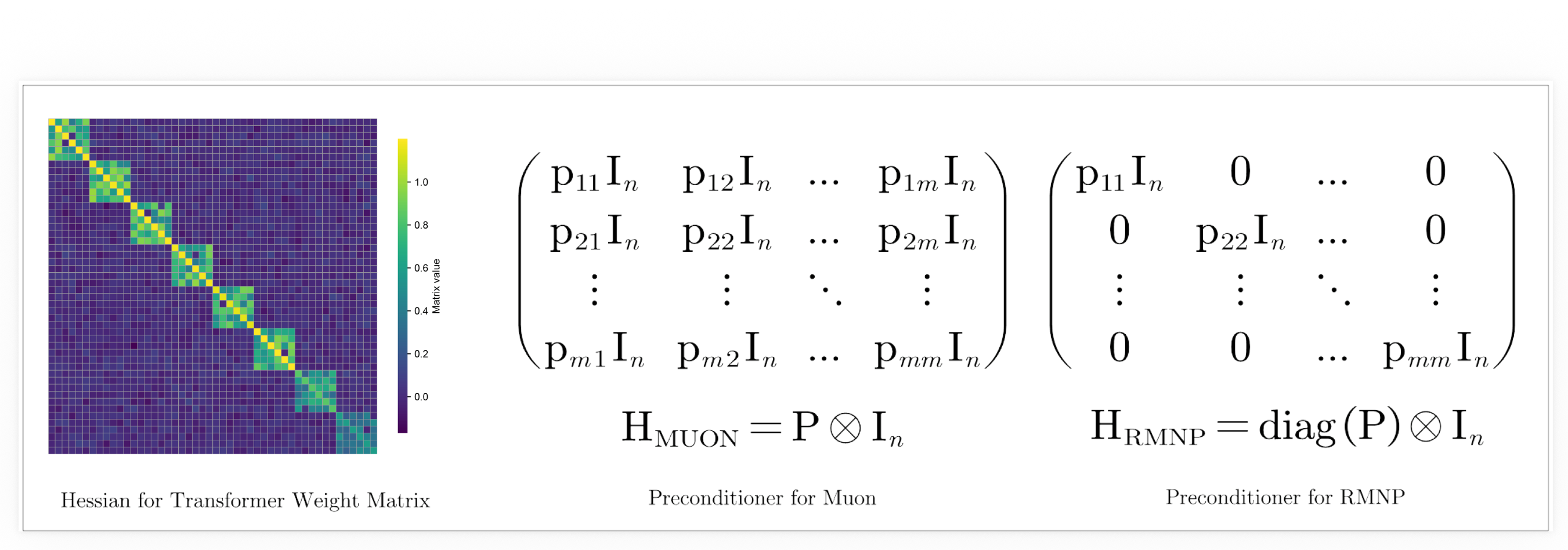

RMNP: Row-Momentum Normalized Preconditioning for Scalable Matrix-Based Optimization

Shenyang Deng*, Zhuoli Ouyang*, Tianyu Pang, Zihang Liu, Ruochen Jin, Shuhua Yu, Yaoqing Yang

ICML 2026

HTMuon: Improving Muon via Heavy-Tailed Spectral Correction

Tianyu Pang*, Yujie Fang*, Zihang Liu, Shenyang Deng, Lei Hsiung, Shuhua Yu, Yaoqing Yang

ACL 2026 Findings

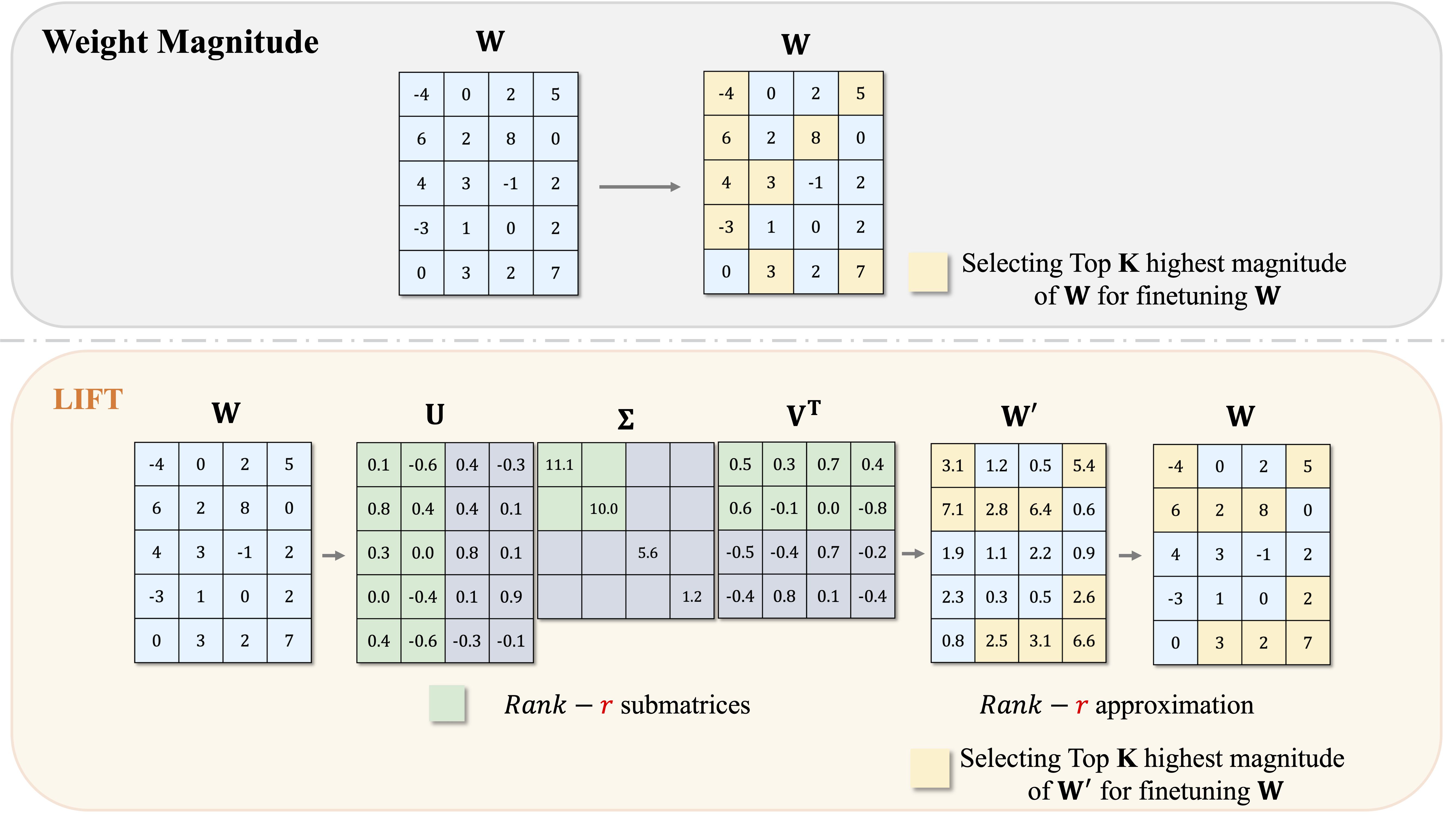

LIFT the Veil for the Truth: Principal Weights Emerge after Rank Reduction for Reasoning-Focused Supervised Fine-Tuning

Zihang Liu, Tianyu Pang, Oleg Balabanov, Chaoqun Yang, Tianjin Huang, Lu Yin, Yaoqing Yang, Shiwei Liu

ICML 2025

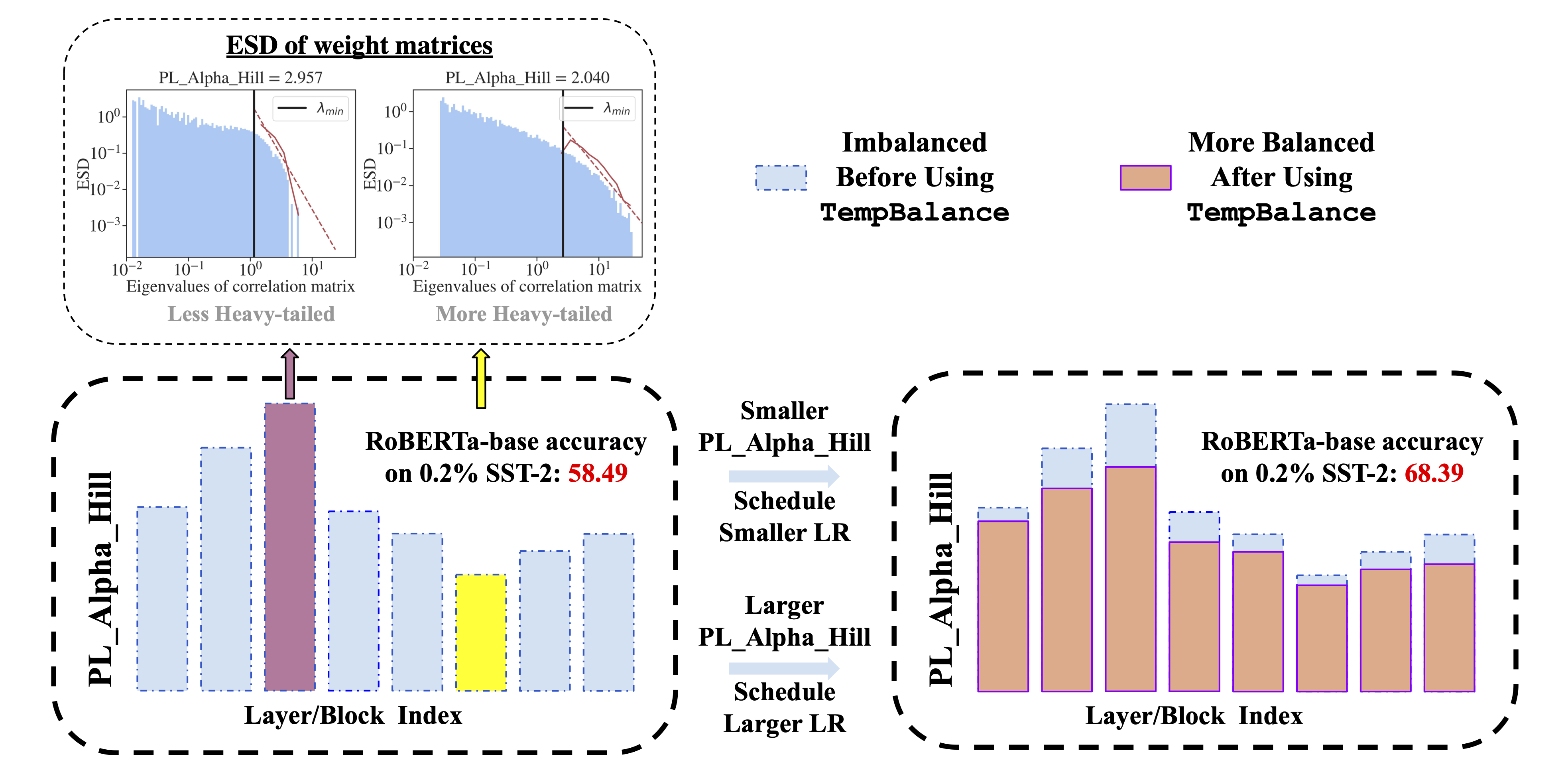

Model Balancing Helps Low-data Training and Fine-tuning

Zihang Liu*, Yuanzhe Hu*, Tianyu Pang, Yefan Zhou, Pu Ren, Yaoqing Yang

EMNLP 2024 Oral